Azure App Cloud Discovery is a seriously cool piece of technology. Being able to scan your entire computer estate for cloud SaaS applications in either a targeted, or catch-all manner can really help discover the ‘Shadow IT’ going on in your environment. Nowadays, users not having local admin rights won’t necessarily stop them from using cloud SaaS apps in any way which is going to increase their productivity. Users don’t generally think about the impact of using such applications, and the potential for data leakage.

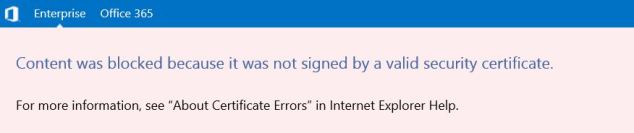

But, as with lots of Microsoft’s other cloud technologies which are being launched left, right and centre at the moment, the Enterprise isn’t catered for as it might hope. Most Enterprise IT departments leverage some kind of web filtering, or proxying. This may be using transparent proxying, in which case you can count your blessings as Cloud App Discovery will work just fine. If you are explicitly defining a proxy in your internet settings, then you can get around that by adding particular registry keys. However if you are using a PAC file to control access to the internet, then unfortunately Cloud App Discovery will not work for you. This is a shame as it is, in my opinion, the best way to approach web proxying in an Enterprise, but that’s another story. From what I have heard, a feature is in the works which will allow you to configure Cloud App Discovery agents to log their findings to an internal data collector. This data collector can sit on a local server and then upload data to Azure on your behalf, which is a much more elegant solution to the problem of data collection from multiple machines. However as far as I know, this feature is not available yet. I’ll be keeping my ear to the ground and will let you know if this changes.

In the meantime, if you are desperate to get your data collection up and running in the meantime, you could change to explicitly defined proxying, and configure registry settings for your clients as per the following MS article:

https://azure.microsoft.com/en-gb/documentation/articles/active-directory-cloudappdiscovery-registry-settings-for-proxy-services/

Cloud App Discovery is a feature of Azure Active Directory Premium, a toolkit designed to take your Azure Active Directory to new, cloudier heights. Azure AD Premium can be bought standalone, or comes bundled with the Enterprise Mobility Suite. I would highly recommend it to Office 365 customers as it can give you and your users some great new features which can help make your Azure AD the best it can be!

Edit: It looks like PAC file support has been added rather surreptitiously. No announcement was made, and the KB articles haven’t been updated. I happened to check the Change Log today and Release 1.0.10.1 includes an option to tweak your PAC file to support Cloud App Discovery. https://social.technet.microsoft.com/wiki/contents/articles/24616.cloud-app-discovery-agent-changelog.aspx

Alternatively, if you use a PAC file (Proxy Auto Configuration) to manage proxy configuration, please tweak your file to add https://policykeyservice.dc.ad.msft.net/ as an exception URL.

I’m yet to test this myself but it looks promising!